This is one of those SEO tracks that have been on repeat throughout my career as a freelance SEO.

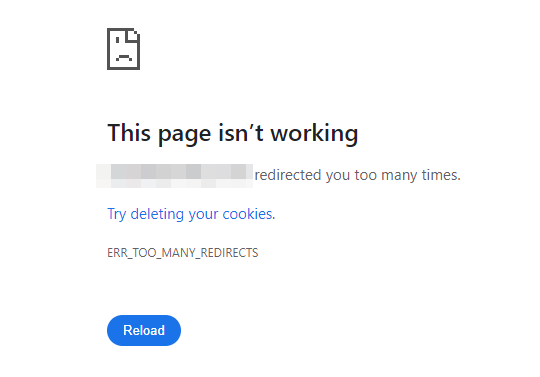

You're inspecting a page URL in Search Console (or doing a good old-fashioned "fetch and render" in Google Webmaster Tools, if you've been around the block a bit) and, shock horror, you're met with a "broken" page render.

This happened a lot with Google's Mobile-Friendly Test: I'd often be hit with automated alerts from Google Search Console warning that a client site was failing the test. Digging in further, I'd find that a broken CSS file was the cause.

When it happens, the page does look terrible in the viewport, often because the external style.css file (naming convention may vary) wasn't accessible to Googlebot at the moment of the inspection/render, for whatever reason.
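One common reason a stylesheet isn't accessible to Googlebot is a robots.txt rule that disallows the folder it lives in. As a quick first check, here's a minimal sketch using Python's standard-library `urllib.robotparser`; the robots.txt content and the URL are hypothetical examples. Bear in mind this parser is simpler than Googlebot's (it doesn't understand Google's `*` and `$` wildcards, and it applies the first matching rule rather than the most specific one), so treat the result as a hint and confirm it with Google's own tools.

```python
from urllib.robotparser import RobotFileParser


def googlebot_can_fetch(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Rough check of whether robots.txt rules allow `user_agent` to fetch `url`.

    Caveat: urllib.robotparser matches plain path prefixes in file order and
    does not understand Google's * and $ wildcards, so this only approximates
    how Googlebot reads the file.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks the theme folder holding style.css.
EXAMPLE_ROBOTS = """\
User-agent: *
Disallow: /wp-content/themes/
"""

css_url = "https://www.example.com/wp-content/themes/site/style.css"
print(googlebot_can_fetch(EXAMPLE_ROBOTS, css_url))  # False: the stylesheet is disallowed
```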

For those who want to brush up on what CSS can do, this W3Schools article covers it nicely.

The question that was always on my mind: is this actually an issue? Or is it just a glitch in the Matrix, a "feature" of Search Console's rendering process?

How do you determine whether a broken render is causing an SEO issue?

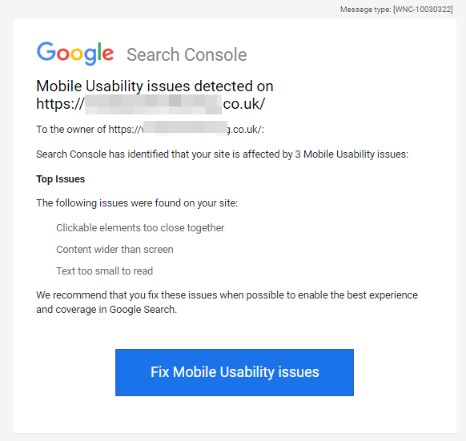

So the email subject line from Google warning of this error isn't the most subtle:

> New Mobile Usability issues detected for site

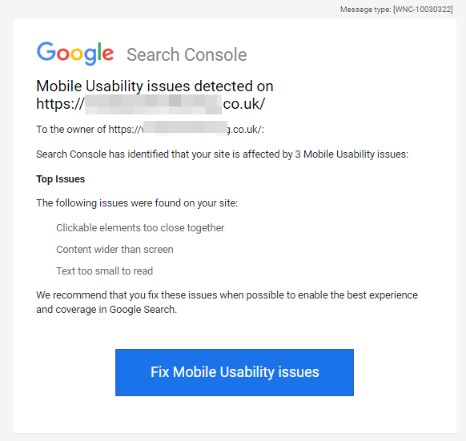

I can imagine many clients and other stakeholders with GSC access would panic a bit on receiving a message like that. "I thought our website was 100% responsive?!" Cue an angry email or phone call to their SEO or web developer.

I don't think Google's detection system is the most reliable. At first I was worried when receiving an alert like this, but after further digging it would often turn out to be a false positive.

I do still like to check things to be on the safe side, and I recommend you do too. Anecdotally, these emails seem to have stopped, or at least calmed down, so maybe Google has made some adjustments to this alert.

The contents of the email go on to state the following:

> To the owner of [redacted]:
>
> Search Console has identified that your site is affected by 3 Mobile Usability issues:
>
> Top Issues
>
> The following issues were found on your site:
>
> Clickable elements too close together
>
> We recommend that you fix these issues when possible to enable the best experience and coverage in Google Search.

When we look into each of those issues, they're all caused by the same problem: a broken external CSS file. When that breaks, the page formatting goes awry and everything overlaps. Text becomes too small to read on mobile devices, the viewport is broken, and so on.

Checking if the Mobile Usability issues are causing you problems

I've tried to get to the bottom of this in a few ways. I had some great feedback on the Google Webmaster Forums.

User BarryHunter (who seems to spend a great deal of time helping others out on the forums) said the following when I asked about the "temporarily unavailable" resources error in Search Console and the broken render I was getting from the URL Inspection tool:

> "Other Error" (or "Temporarily unreachable" in Fetch as Google) is generally a euphemism for "Not Enough Fetch Quota Available" in my experience.
>
> ... Google has a quota per site, for the number of requests its WILLING to make to the particular server. Partly to avoid an accidental DOS (ie if Google made requests without restraint it could quickly overwhelm most servers, Google has more servers than most sites!), but also as a 'resource sharing' system, to avoid devoting too much bandwidth to any particular site, and then not being able to index other sites.
>
> https://webmasters.googleblog.com/2017/01/what-crawl-budget-means-for-googlebot.html
>
> So some requests 'fail' because Google proactively aborted the request - without contacting the server - because it WOULD potentially push it over quota
>
> This quota is 'used' up by Googlebot making normal crawl activity, and when use the various testing tools. So the amount of quota available at any moment will vary a lot - depending on recent activity. ... which ones happen to succeed and which ones blocked in any particular 'attempt' is effectively random.
>
> So the quota value is selected by Google; can't make Google allocate more resources to crawling your site (other than making haven't already manually restricted it in Site settings!)
>
> ... but 1) can check if Google is generally wasting crawl quota, crawling 'useless' pages (or resources); pruning nonessential stuff, would allow more crawling of the 'good' stuff.
>
> and 2) see if can make pages more efficient, and use LESS resources. Less resources wont exhaust the quota as quick!
>
> finally 3) if the failures are causing intermittent 'fails' in the test, its because the page doesnt cope well with missing resources. (e.g. there is 'critical' CSS in a skipped file), so maybe can rework the page to work despite not having all resources loaded. E.g. inline the very critical CSS into the main page, so doesnt matter if the 'full' css file is missed.
>
> Note 'Other Error' CAN also be caused by issues with the hosting server, connection timeouts, 503 http status etc, but in general I think the above explanation is most likely. Just don't rule out issues with origin server based on my description above.
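Barry's third suggestion is to inline the critical CSS so the page still holds together if the full stylesheet is skipped. In practice this is usually handled by a build tool or a performance plugin. The hypothetical helper below is only a minimal sketch of the idea, assuming you already know which rules are critical: it places them in a `<style>` block straight after the opening `<head>` tag, ahead of the external stylesheet link.

```python
import re

# Matches the opening <head> tag (with or without attributes) but not <header>.
HEAD_TAG = re.compile(r"<head\b[^>]*>", re.IGNORECASE)


def inline_critical_css(html: str, critical_css: str) -> str:
    """Return `html` with `critical_css` inlined right after the opening <head> tag.

    Hypothetical helper: the external stylesheet link is left in place, so the
    full styles still load when they can; the inline block only needs to cover
    the layout that must not break if that file is skipped.
    """
    match = HEAD_TAG.search(html)
    if match is None:
        raise ValueError("no <head> tag found")
    style_block = f'<style id="critical-css">{critical_css}</style>'
    return html[:match.end()] + style_block + html[match.end():]


page = '<html><head><link rel="stylesheet" href="/style.css"></head><body></body></html>'
print(inline_critical_css(page, "body{margin:0}"))
```

Keep the inlined block small: inlining the whole stylesheet would just move the weight into every HTML response.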

BarryHunter also went on to clarify:

> The limit is actually imposed Googles end . Although if Google thinks the host can't 'cope' it does lower the crawl rate! Basically if it 'ramps' up the number of pages and it seems to correlate with the host failing to serve, it determines that perhaps the rate is not sustainable by the host. Add hence keeps the rate lower. So can check with host can cope with the request levels. If there are some resources that can be removed, that may well be worthwhile, save bandwidth and make pages load quicker 🙂
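To make the quota explanation a bit more concrete, here's a toy model. To be clear, this is not how Google actually implements crawl quota. It assumes a render attempt can only fetch as many of the page's subresources as there is quota left and, as Barry describes, that which ones get skipped is effectively random. Under those assumptions, style.css is skipped with probability (n - q) / n for n resources and q remaining quota, which is why pruning non-essential resources helps. The function name and numbers are hypothetical.

```python
import random


def css_skip_rate(num_resources: int, remaining_quota: int,
                  trials: int = 10_000, seed: int = 0) -> float:
    """Toy model: estimate how often the stylesheet is skipped in a render attempt.

    Assumes each attempt fetches `remaining_quota` of the page's subresources,
    taken in a random order, and skips the rest. Resource 0 stands in for style.css.
    """
    rng = random.Random(seed)
    resources = list(range(num_resources))
    skipped_count = 0
    for _ in range(trials):
        order = resources[:]
        rng.shuffle(order)
        fetched = set(order[:max(remaining_quota, 0)])
        if 0 not in fetched:
            skipped_count += 1
    return skipped_count / trials


# Same leftover quota, heavier page vs leaner page (hypothetical numbers).
print(f"12 resources: {css_skip_rate(12, 5):.2f}")  # expected to land near 7/12, about 0.58
print(f" 6 resources: {css_skip_rate(6, 5):.2f}")   # expected to land near 1/6, about 0.17
```

With the same leftover quota, the leaner page loses its stylesheet far less often, which is the reasoning behind Barry's points 2 and 3.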

From my own reviews and digging, this was the best answer I could find to the particular issue I was having with temporarily unavailable resources.

Other steps I take to dig deeper into the issue:

- Bit of a Homer Simpson one, but first of all check that the site does indeed load OK in your browser! Check a few browsers and a few devices. Chrome DevTools is handy for this too, as its device mode can emulate other devices.

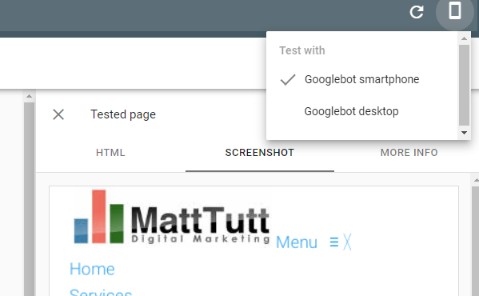

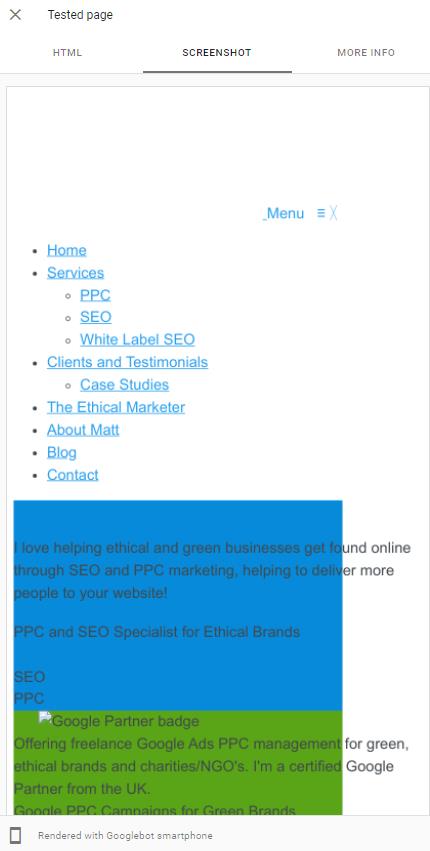

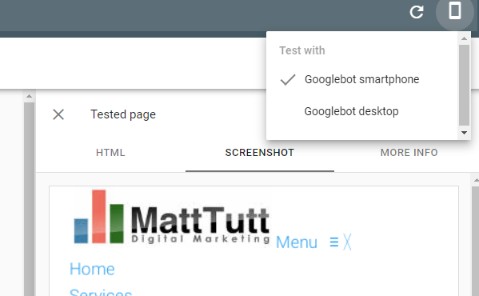

- If I don't have access to Search Console, I'll put the page through the Rich Results Test. This tool was recently upgraded so it now shows a render for both desktop and smartphone devices, something that wasn't possible before (previously only the smartphone view was supported).

- From this tool, if I do see an issue, I'll check the HTTP response, as well as the Page resources (under the More Info tab) to see exactly what couldn't be loaded. It's normal to see lots of ad scripts listed here and, on WordPress sites, various plugins that might be blocking crawlers but aren't a required part of the render.

- Sometimes I'll take the HTTP response that is returned and paste it into the DOM of a new incognito tab in Chrome, to "render" the page in my own browser. I could pretend to be some kind of evil genius, but it was actually @ohgm (an actual evil genius) who made me aware this was possible. Previously I just copied and pasted it into Notepad and saved it as an HTML file 😕

What this does is let you see a scrollable render of the whole page. Otherwise you're limited to the initial snapshot of the page, on desktop or mobile, in the Rich Results Test.

- If I'm still curious or undecided, I might crawl the page in Screaming Frog with JavaScript rendering enabled and the Googlebot user agent selected, just to get another opinion. I wouldn't suggest this is the most reliable option; I'd much rather use a Google tool to check this if I can.
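Going back to the HTTP response copied from the testing tool: rather than eyeballing it, a short script can list the stylesheets the page references and report the status code each one returns. This is a minimal sketch using only Python's standard library; the User-Agent string and example URLs are placeholders. A status code fetched from your own machine only shows what your connection receives today, not what Googlebot got at render time, so treat it as one more data point.

```python
import urllib.error
import urllib.request
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urljoin


class StylesheetFinder(HTMLParser):
    """Collects absolute URLs of <link rel="stylesheet"> tags in an HTML document."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.stylesheets: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").lower().split()
        href = attr_map.get("href")
        if "stylesheet" in rel_values and href:
            # Resolve relative hrefs against the page URL.
            self.stylesheets.append(urljoin(self.base_url, href))


def find_stylesheets(html: str, base_url: str) -> list[str]:
    finder = StylesheetFinder(base_url)
    finder.feed(html)
    finder.close()
    return finder.stylesheets


def fetch_status(url: str, user_agent: str = "stylesheet-check/0.1") -> Optional[int]:
    """Return the HTTP status for `url`, or None if the request failed outright."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as err:  # 4xx/5xx responses land here
        return err.code
    except OSError:  # DNS failures, refused connections, timeouts
        return None


# Usage, with the HTML from the testing tool saved to a local file:
# html = open("rendered.html", encoding="utf-8").read()
# for url in find_stylesheets(html, "https://www.example.com/page/"):
#     print(fetch_status(url), url)
```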

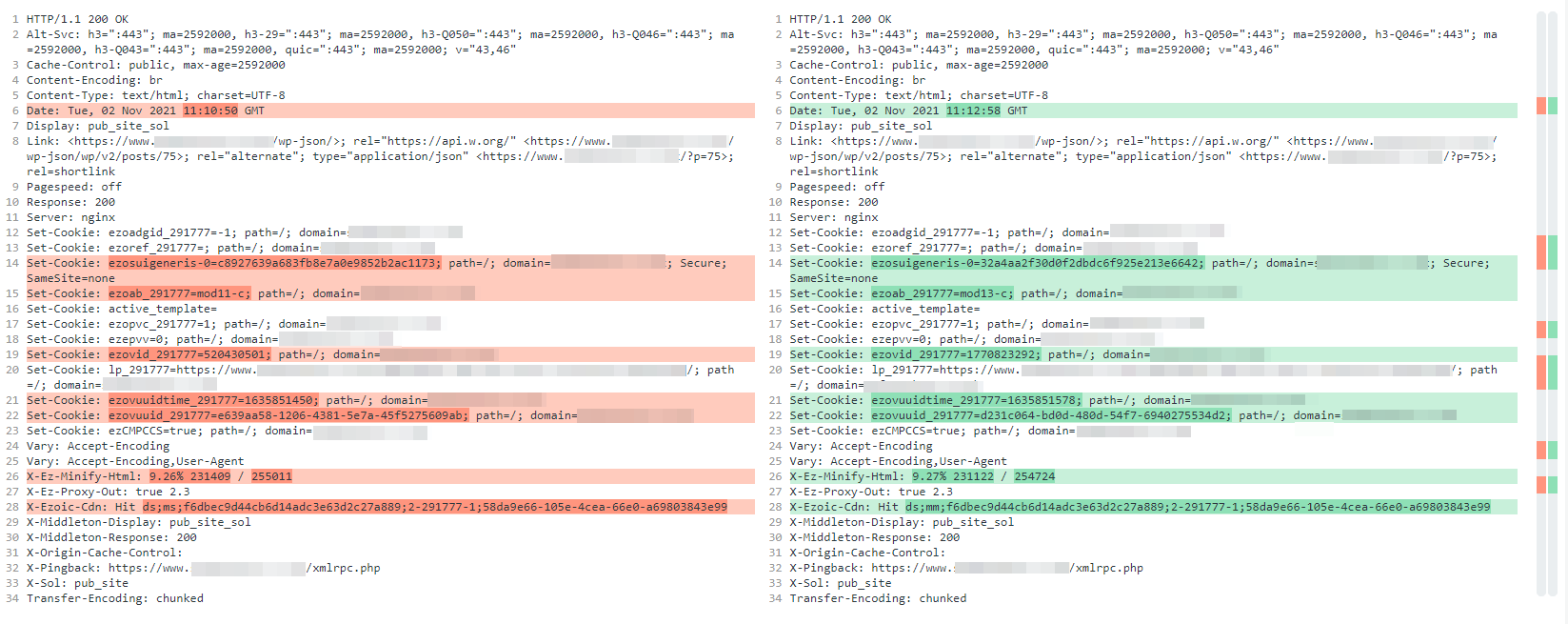

- This one is important: I'll run the Google tests a few times to get a decent sample of data. I've had renders that were fine one moment and broken the next, with seemingly nothing changed. In these instances I'll copy the HTTP response for the "good" render and paste it into a tool like Diffchecker.com. I'll then repeat the test until I catch a "broken" render, do the same, and compare the two responses. This can help pinpoint the issue.

Using Diffchecker to highlight differences in the HTML response

- Try to get your hands on the server logs. When you filter them to Googlebot, can you see those CSS files being requested and served OK? If Googlebot is getting 4xx or 5xx status codes for them, that's likely a big red flag.

- What does the tech stack on the backend of the site look like? Are CDNs such as Cloudflare, or other systems, in use that could be at fault? Could Cloudflare be unknowingly blocking access here?
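The Diffchecker comparison above can also be done locally. Here's a minimal sketch using Python's `difflib`, assuming you've saved the "good" and "broken" HTTP responses from the testing tool as two strings; the sample strings and the file labels in the diff header are hypothetical. Lines starting with `-` appear only in the good response, and lines starting with `+` only in the broken one.

```python
import difflib


def diff_responses(good_html: str, broken_html: str) -> list[str]:
    """Unified diff of two saved HTTP responses ('-' = only in good, '+' = only in broken)."""
    return list(difflib.unified_diff(
        good_html.splitlines(),
        broken_html.splitlines(),
        fromfile="good_render.html",
        tofile="broken_render.html",
        lineterm="",  # splitlines() already removed the newlines
    ))


# Hypothetical responses: the broken one is missing the stylesheet link.
good = """<head>
<link rel="stylesheet" href="/style.css">
</head>"""
broken = """<head>
</head>"""

for line in diff_responses(good, broken):
    print(line)
```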
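For the server-log check, a short script can pull out Googlebot requests for CSS files that received an error status. This is a minimal sketch that assumes access logs in the common Apache/Nginx "combined" format; adjust the regex for any other format. User-agent strings can be spoofed, and Google documents verifying genuine Googlebot traffic with a reverse DNS lookup, so confirm the IPs before drawing conclusions. The log path in the usage comment is hypothetical.

```python
import re

# Apache/Nginx "combined" log format:
# ip ident user [time] "METHOD path PROTOCOL" status bytes "referer" "user-agent"
COMBINED_LOG = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_css_errors(log_lines):
    """Return (time, ip, path, status) for Googlebot CSS requests that got a 4xx or 5xx."""
    errors = []
    for line in log_lines:
        match = COMBINED_LOG.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        path = match["path"]
        status = int(match["status"])
        # Ignore query strings such as ?ver=1.2 when checking the file extension.
        if path.split("?", 1)[0].endswith(".css") and status >= 400:
            errors.append((match["time"], match["ip"], path, status))
    return errors


# Usage:
# with open("access.log", encoding="utf-8", errors="replace") as log:
#     for row in googlebot_css_errors(log):
#         print(*row)
```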

So - is a broken render an issue or not?

It's hard to say for sure without digging into the issue, as any fairly competent SEO or web developer would. It's also worth noting to what extent the render is broken, and then figuring out the root cause.

This article has touched upon a broken render caused by the CSS file that controls the page styling, but there are lots of other ways a render could break.

It might be from a piece of JavaScript on the page (maybe one of those beautiful carousels we all love?) which is causing the render to look different than it would in your browser. It could also be from a rogue plugin that's misbehaving within WordPress.

Those might be easier to detect and resolve, but they're all worth a quick review.

Some further reading on resolving rendering issues:

https://www.searchenginejournal.com/identify-reduce-render-blocking-resources/316365/

Help Fixing the Mobile Friendly Issue in Search Console

If you think your website is having issues due to failing the mobile-friendly test in Search Console and you'd like an SEO consultant to help diagnose them further, don't hesitate to reach out for a chat.