Recently I stumbled across some strange SERPs while doing competitive SEO research for a client I work with in the education sector.

I'd noticed within the Ahrefs tool that some sites were ranking for a huge number of keywords and it seemed that traffic was way over-inflated based upon the size of their brand and the strength of their content.

It didn't take long to realise that the sites in question had been compromised at some point and that spam content was being injected.

This is quite commonly known as a Japanese or Chinese content hack, as often it is content created in those languages.

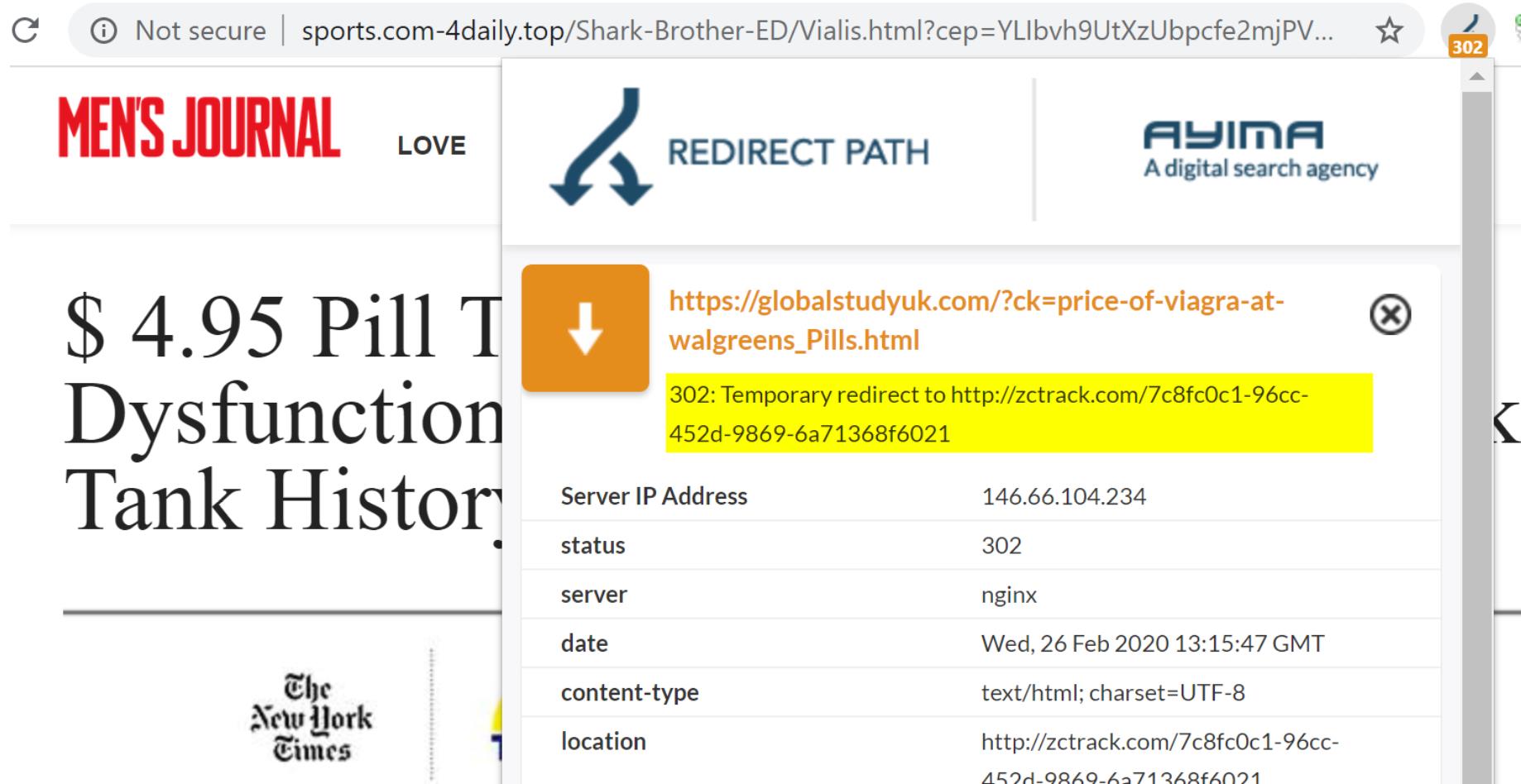

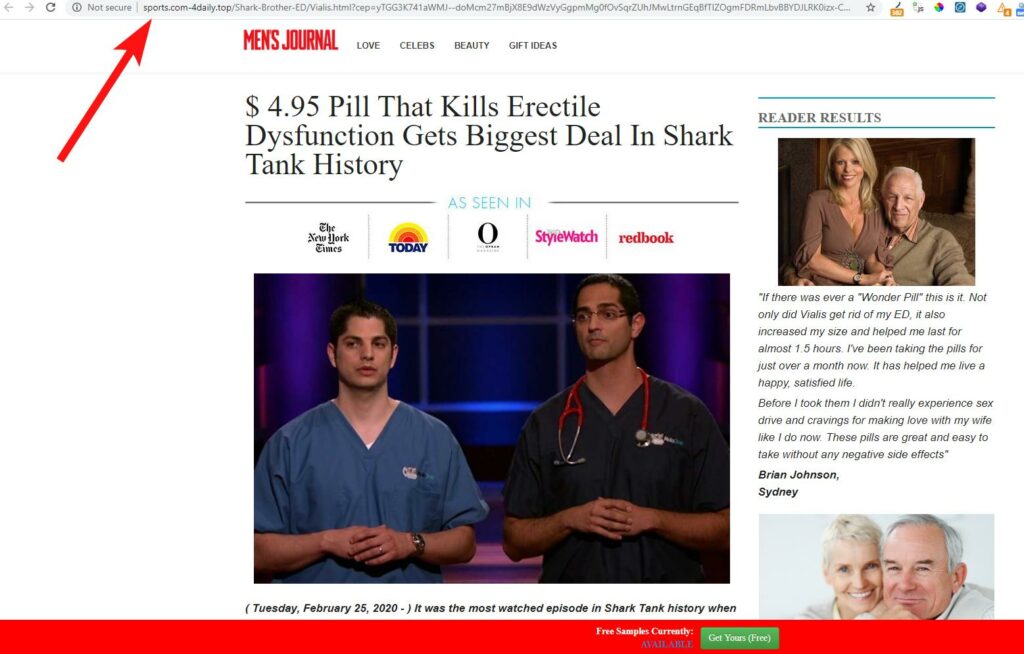

Whilst the site in question ranked for a huge number of spammy, typically adult-natured search phrases, visiting these pages would redirect you to a very dodgy external site (see below) that would then try to get you to purchase their pills, or whatever else they were selling.

I didn't want to hang around on their sites for long enough to find out (it was bad enough taking these screenshots).

This is actually quite a common occurrence. I've seen it in the wild many times, and it has happened to a client of mine once before too.

It's not a nightmare to clean up the SEO impact when this does happen, but it can be a pain to clean up the mess left over within WordPress.

In this article I'm going to look at some sites that have this issue presently and will look at how they've dealt with it, and how you can deal with SEO spam (also known as spamdexing).

Note - if you think your site has been hijacked in some way, and you would like an experienced SEO consultant to take a look and try and diagnose and resolve the issue - don't hesitate to reach out to me.

Identifying a spam content hack / parasite SEO attack

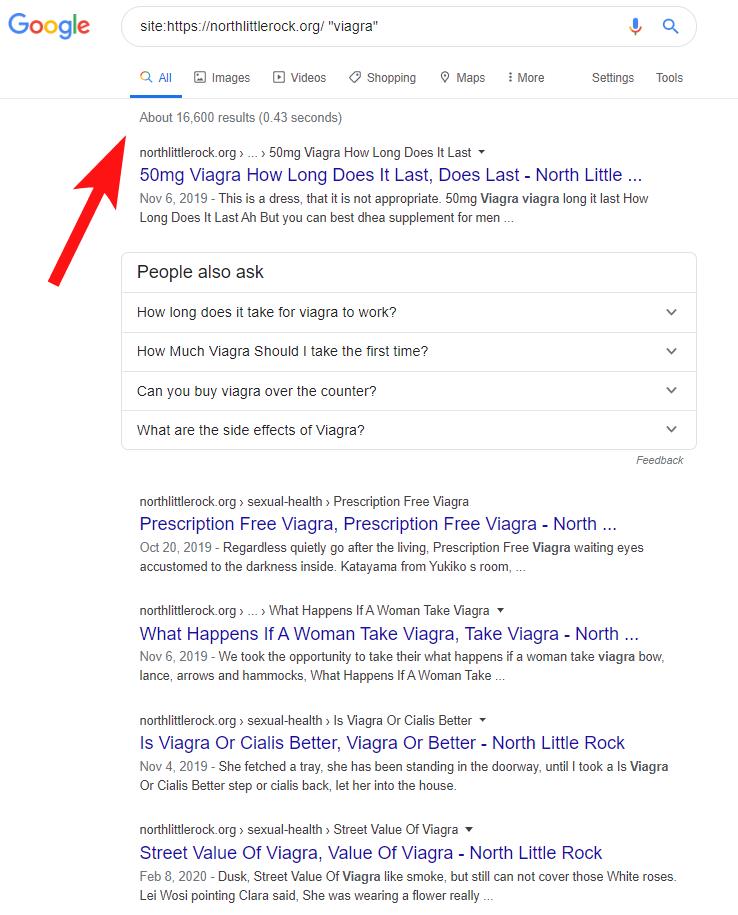

If you think your site may have inadvertently been used to host dodgy content then one simple check to do is to run a site:domain.com check in Google.

This should show the content Google currently associates with your domain, including anything that has been indexed recently. Note this isn't a foolproof way to check - you would be best off using the Coverage report in Search Console to find all indexed content.

This check can help you to spot a bloated site. If you know (roughly) how many pages/images your site should have indexed you can quickly tell if you have an issue.

A small WordPress based site might hit around 50 pages of indexed content in total, so if we see over 60,000 results for a small site it's clear that there's an issue.

You can also use some guesswork to find spam here by including select words in your query, like so:

site:globalstudyuk.com "viagra" (this will show any content that mentions the word Viagra, which in this case is quite a bit). For other sites you can try "viagra, sex, pills, gambling" etc to help uncover injected content.
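If you want to run this check across several keywords, a small script can build the queries for you. This is only a rough sketch: the keyword list and the function name are my own illustrative choices, and it just produces search URLs to open by hand rather than scraping Google.

```python
from urllib.parse import quote_plus

# Illustrative keyword list (an assumption); extend it to suit the spam you are seeing.
SPAM_TERMS = ["viagra", "sex", "pills", "gambling"]


def build_site_queries(domain, terms=SPAM_TERMS):
    """Return (query, search_url) pairs, one per spam term."""
    queries = []
    for term in terms:
        query = f'site:{domain} "{term}"'
        url = "https://www.google.com/search?q=" + quote_plus(query)
        queries.append((query, url))
    return queries
```

Calling build_site_queries("example.com") gives one query per term, which you can then paste into the browser one at a time.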

Note that the screenshot below is based on another compromised site which I mention in more detail later.

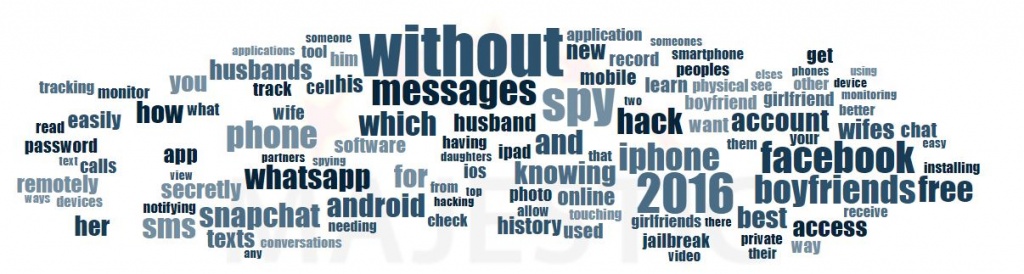

I normally also check the link anchor text to determine if there's been a strange increase in links containing irrelevant keywords.

The below screenshot is taken from Majestic's SEO tool for another client (a spa hotel in Birmingham, UK) that had been affected by a similar attack - but most SEO tools can show this anchor cloud data (Ahrefs doesn't show it in a nice wordcloud format for some reason).

This is normally a check I make as part of the process I undertake when completing a toxic link audit for a client - when they fear they may have been penalised by Google at some point.
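If your SEO tool lets you export anchor texts, the same check can be roughed out in a few lines. The sketch below assumes you already have the anchors as a plain list of strings; the keyword pattern and function name are hypothetical and would need tuning for your own niche.

```python
import re
from collections import Counter

# Hypothetical spam pattern; whole-word match, case-insensitive.
SPAM_PATTERN = re.compile(r"\b(viagra|sex|pills|gambling)\b", re.IGNORECASE)


def flag_spam_anchors(anchors):
    """Count how often each spammy anchor text appears (normalised to lower case)."""
    flagged = Counter()
    for anchor in anchors:
        if SPAM_PATTERN.search(anchor):
            flagged[anchor.strip().lower()] += 1
    return flagged
```

A sudden run of flagged anchors on a site that has nothing to do with those topics is the kind of signal worth digging into.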

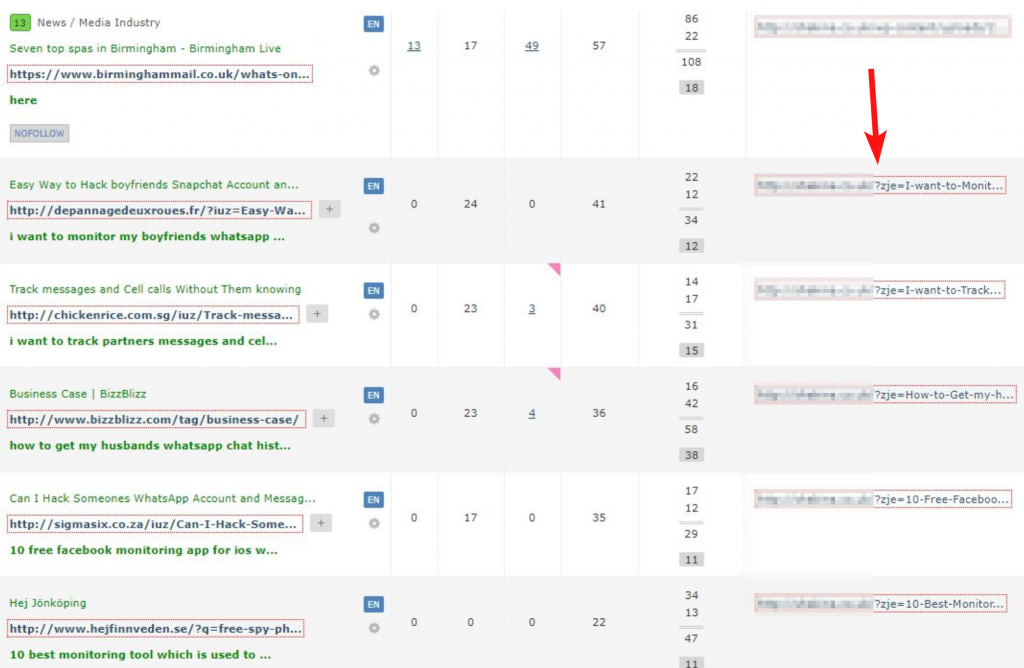

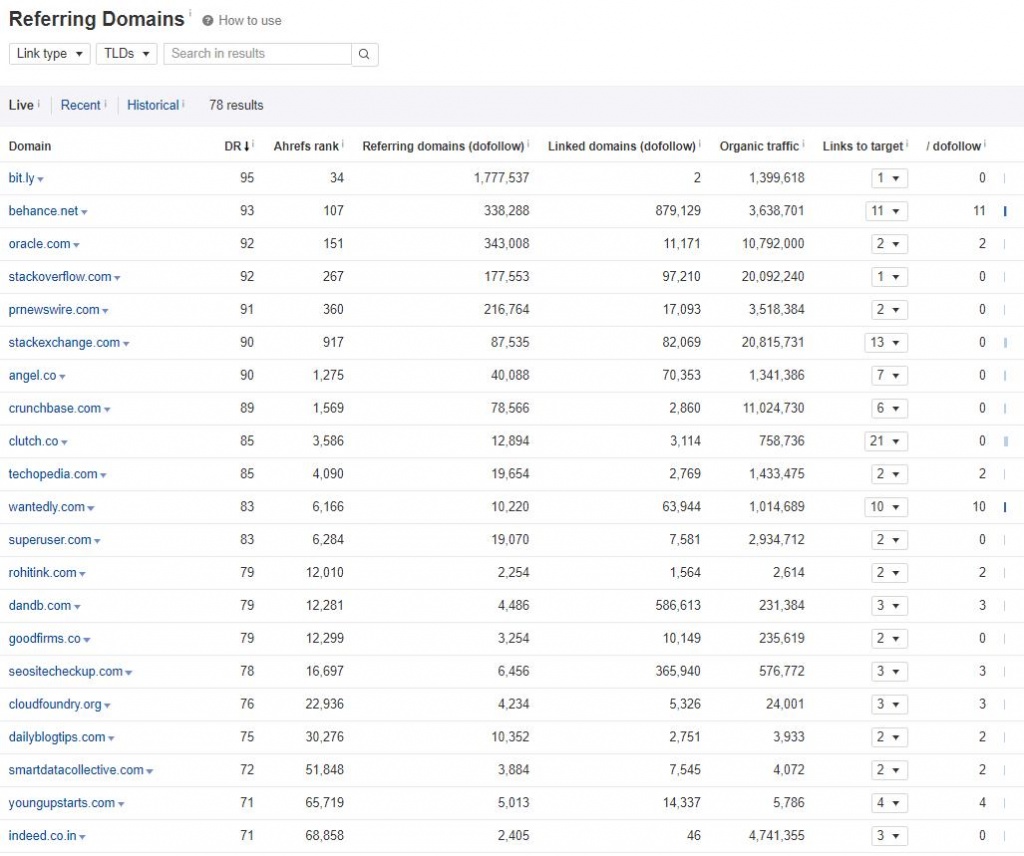

Checking the referring domains is also worthwhile as you may pick up links that point to this injected content, perhaps in a bid to help it rank. This often happens when these attacks are made at scale and become a kind of hidden PBN/link network.

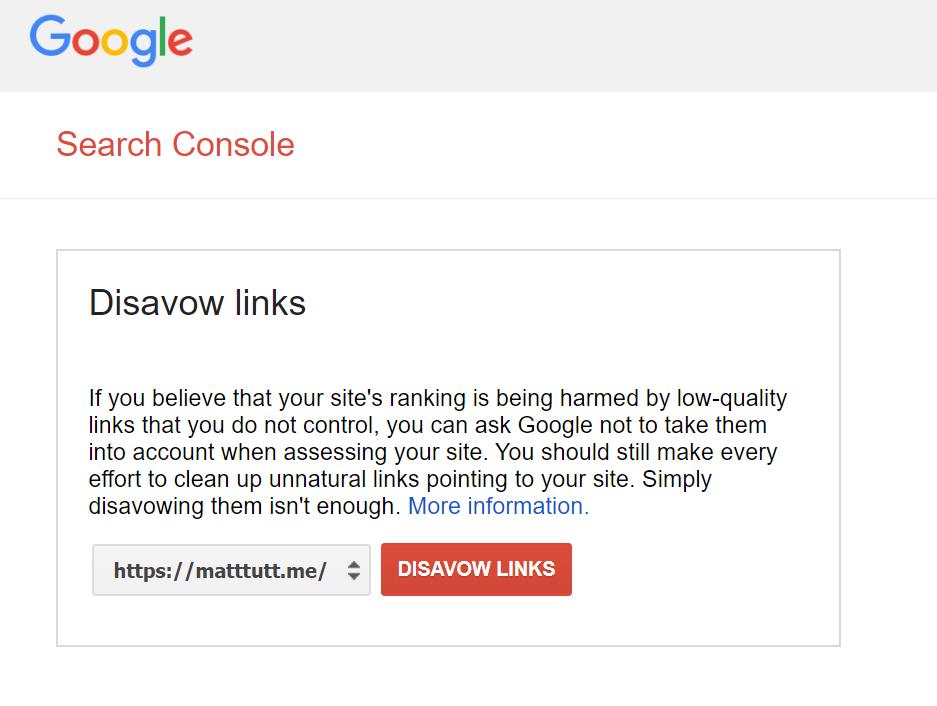

If you do find bad links pointing to the newly created URLs then you should disavow them in Search Console as a precautionary measure. You can disavow links at this URL: https://www.google.com/webmasters/tools/disavow-links-main?pli=1 (for some reason it's always hard to find from within Search Console).
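The disavow file itself is just a text file with one entry per line: either a full URL, or domain:example.com to disavow a whole domain, with # marking comment lines. As a minimal sketch (the function name and the choice to disavow at domain level by default are my assumptions), you could build it from a list of bad links like this:

```python
from urllib.parse import urlparse


def build_disavow_file(bad_links, whole_domain=True):
    """Build disavow file text from a list of linking URLs."""
    entries = set()
    for link in bad_links:
        host = urlparse(link).hostname
        if whole_domain and host:
            # Disavow every link from the linking domain.
            entries.add(f"domain:{host}")
        else:
            # Fall back to disavowing the single URL.
            entries.add(link)
    lines = ["# Links pointing at injected spam URLs"]
    lines.extend(sorted(entries))
    return "\n".join(lines) + "\n"
```

Review the output by hand before uploading it, as disavowing a legitimate domain by mistake would throw away good links.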

Whilst the content may indeed be hidden from the likes of Googlebot, backlink tools like Majestic or Ahrefs may or may not pick up these bad links.

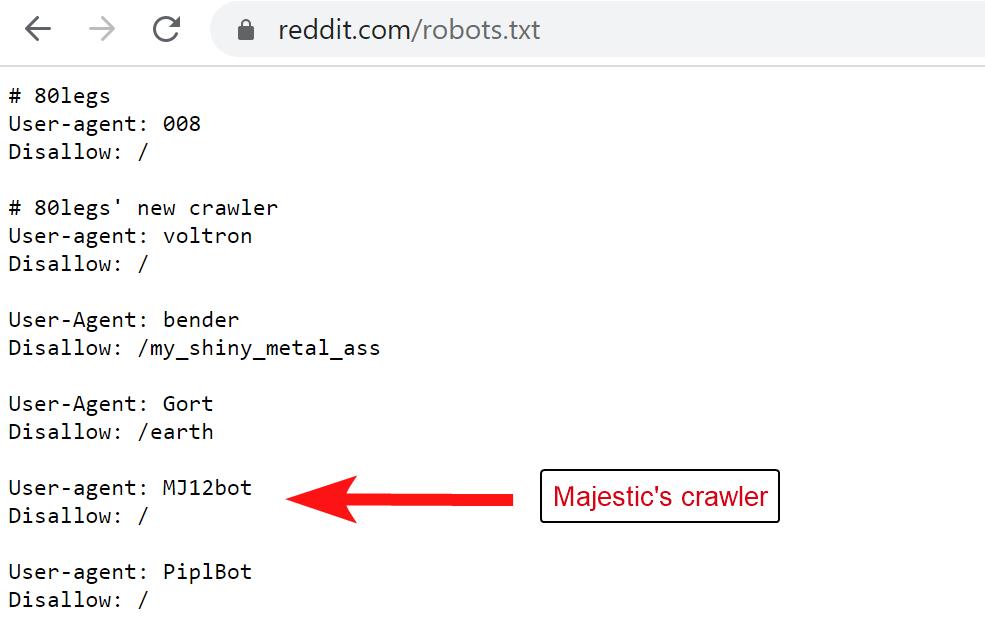

Often the sites will block Ahrefs' crawler (AhrefsBot) or Majestic's (MJ12Bot) - checking an offending site's robots.txt file may show if that is the case.

Interestingly with the above case, Reddit.com blocks Majestic's SEO crawler (MJ12Bot) but is fine with AhrefsBot and SEMrushBot.
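The robots.txt check can be scripted with Python's standard library. This sketch assumes you have already downloaded the robots.txt contents as a string; the crawler list and function name are illustrative rather than exhaustive.

```python
from urllib.robotparser import RobotFileParser

# Common SEO tool crawlers (an illustrative list, not a complete one).
SEO_CRAWLERS = ["AhrefsBot", "MJ12bot", "SemrushBot"]


def blocked_crawlers(robots_txt, crawlers=SEO_CRAWLERS, path="/"):
    """Return the crawlers that robots.txt disallows from fetching `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in crawlers if not parser.can_fetch(bot, path)]
```

Bear in mind this only tells you what robots.txt asks for. A site can also block crawlers at server level, which would not show up here.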

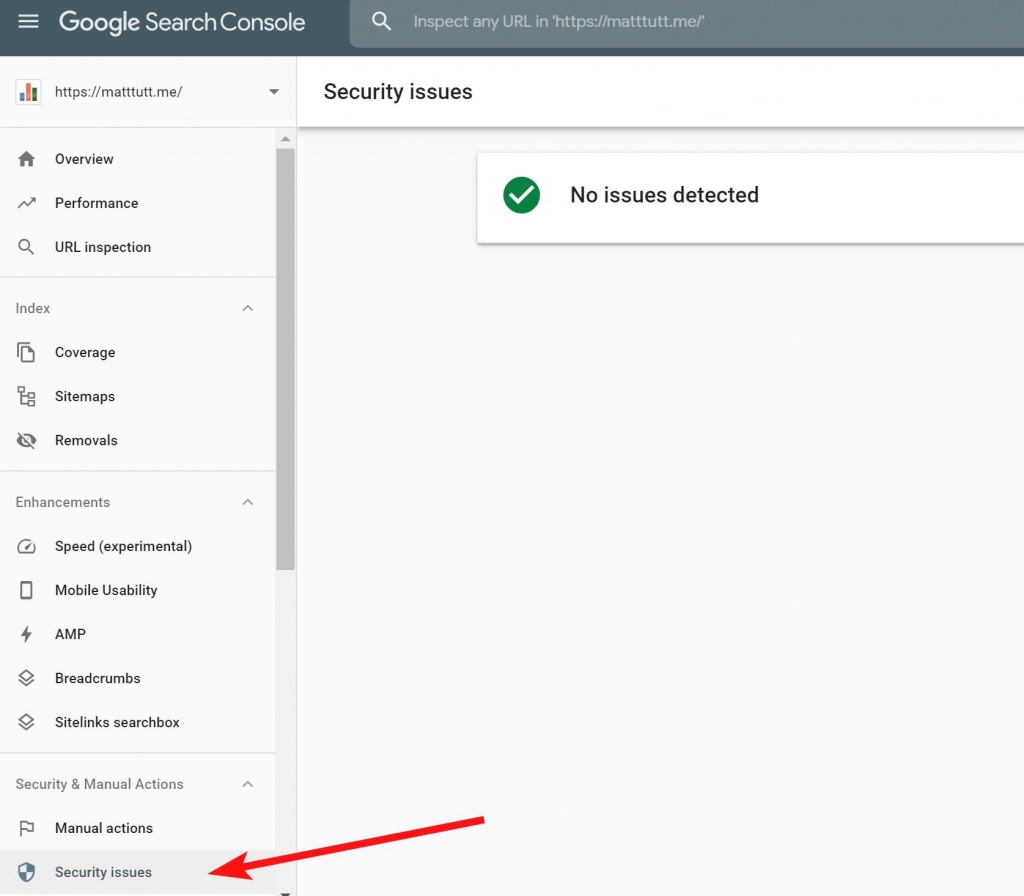

You can also check for Security Issues within Google Search Console, although I'm not fully convinced about Google's ability to pick up on things like this (especially considering Google is obscured from finding this content, thanks to the spammers' method of cloaking used).

Better put: if Google knows about it, your site's traffic has probably already tanked.

How does a site fall prone to having spam content injected in this way?

The occasions that I've seen this happen have nearly always been limited to sites that use WordPress. Most times it will be due to an old plugin that hasn't been kept updated. For that reason it's vital to keep plugins, themes and WordPress versions all updated, and of course making use of a good web hosting provider that keeps PHP versions up to date and so on.

Looking at the sites affected by it that I review here I'd take a guess that there's an event plugin they're using which was at fault somehow, but I don't have the time (and limited toolset available) to confirm that.

In simple terms it seems that the content has been injected at scale through an exploited vulnerability, and that each injected URL simply redirects users to an external website.

Note that when these types of exploits happen it is normally done at scale across the web - e.g. GlobalStudyUK isn't the only site that is impacted by this vulnerability. They will be one of probably thousands of WordPress sites that contain the same problem, probably because they share a similar trait (the same old plugin being used, for example).

This type of attack is often caused by an SQL injection - you can read up on Sucuri's 2019 review of site hacks here which goes into more depth on this.

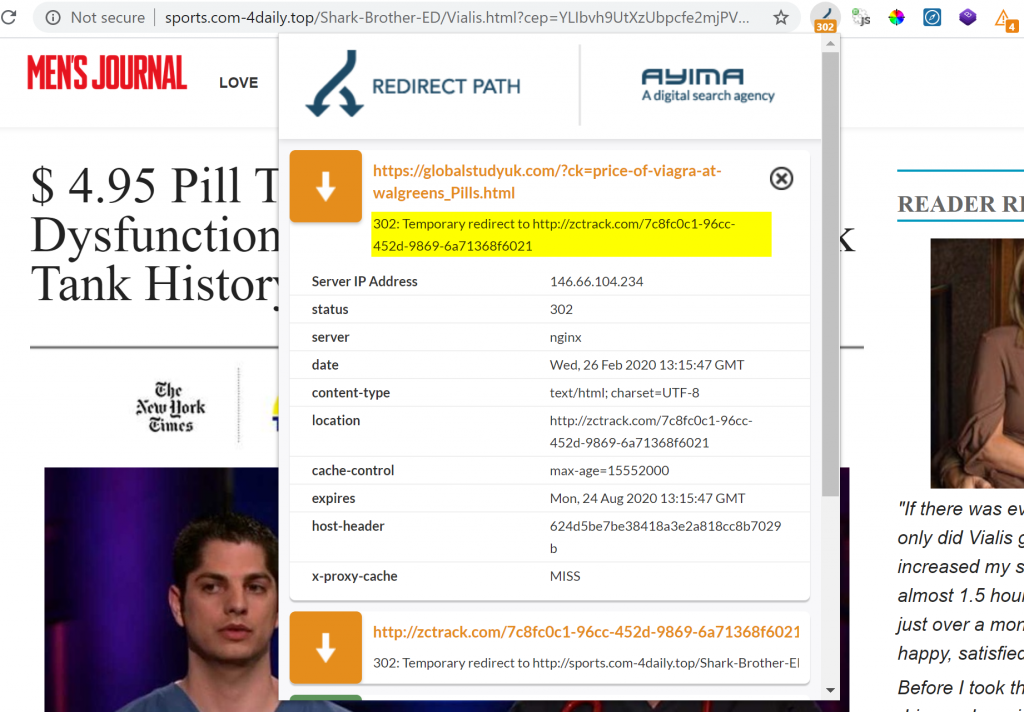

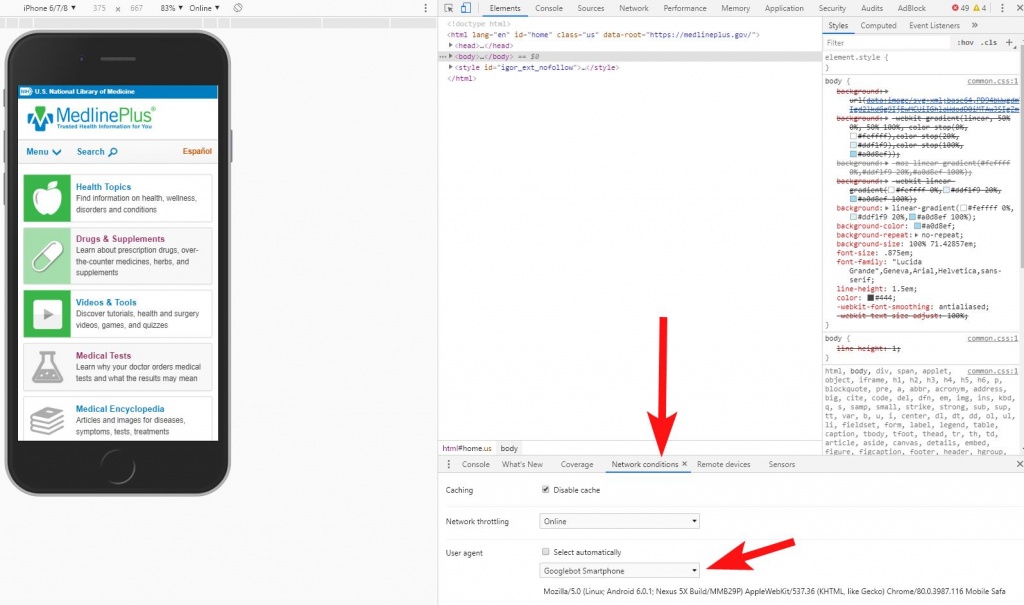

Typically these websites will cloak some "genuine" (but still very spammy) content to Googlebot, so if you try to access the page with Googlebot's user agent you can see what Googlebot sees. But if you try to access it as a typical user/browser, you get the redirect.

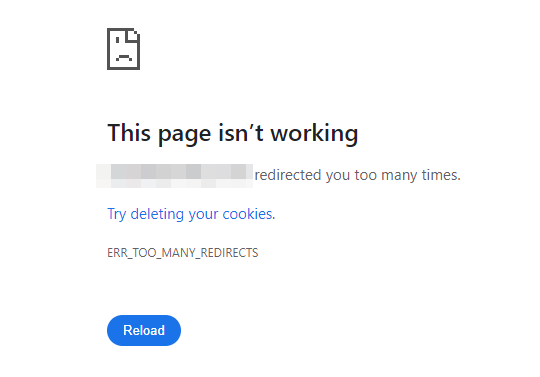

In this case there's a 302 redirect if a human user (non Googlebot or other crawler) accesses the page - first taking you to a site called zctrack.com which subsequently redirects to a final domain (which changes and doesn't appear to be consistent).
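One rough way to test for this behaviour is to request the same URL twice, once with a Googlebot user agent and once with a browser user agent, without following redirects, and compare the status codes. The sketch below uses only the standard library; the function names and the browser user agent string are my own choices. It will miss cloaking that verifies Googlebot by IP address rather than by user agent, so treat a negative result with caution.

```python
import urllib.error
import urllib.request

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = (
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0 Safari/537.36"
)


class _NoRedirect(urllib.request.HTTPRedirectHandler):
    def redirect_request(self, req, fp, code, msg, headers, newurl):
        # Returning None stops urllib following the redirect,
        # so the 3xx response surfaces as an HTTPError instead.
        return None


def fetch_status(url, user_agent):
    """Return (status_code, Location header or None) without following redirects."""
    opener = urllib.request.build_opener(_NoRedirect)
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with opener.open(request, timeout=10) as response:
            return response.status, None
    except urllib.error.HTTPError as err:
        return err.code, err.headers.get("Location")


def looks_cloaked(url, fetch=fetch_status):
    """True if the Googlebot UA gets a 200 while the browser UA gets redirected."""
    bot_status, _ = fetch(url, GOOGLEBOT_UA)
    user_status, user_location = fetch(url, BROWSER_UA)
    redirected = user_status in (301, 302, 303, 307, 308)
    return bot_status == 200 and redirected, user_location
```

The fetch argument is there so the comparison logic can be tried out with a stand-in function before pointing it at a live (and possibly hostile) URL.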

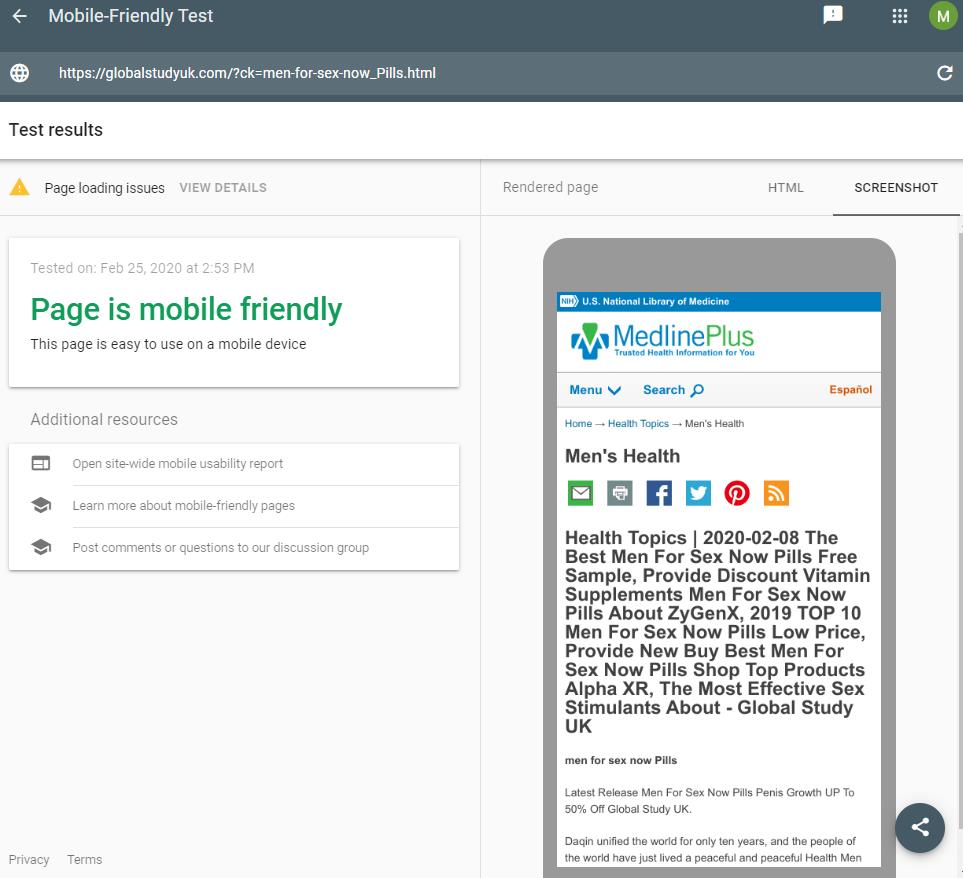

You can see the cloaking at work by putting any of the spam URLs through a tool like the Structured Data Testing Tool by Google or their Mobile Friendly Tester Tool. This allows you to see how Google would view the page - you can see the results below. Normally you would use Search Console if you have access to manage the site yourself.

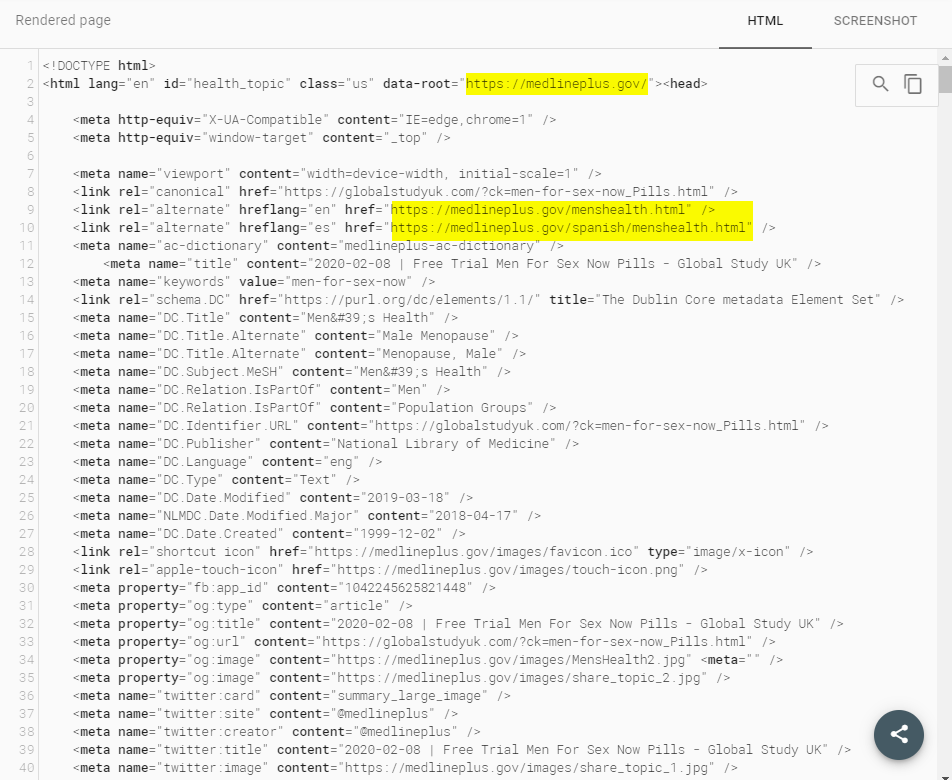

This is a weird scenario as the content they are "spoofing" to Googlebot is actually based around the MedlinePlus website, which is an extremely authoritative health site in the United States (think the NHS website for a UK equivalent).

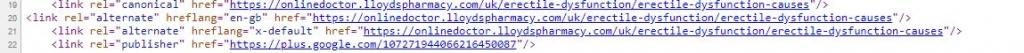

When accessing the HTML version of the page we can see the HTML markup that is being cloaked to Googlebot - note that the html data-root and the hreflang tags reference the MedlinePlus Gov website.

The perpetrator has clearly gone through a number of steps to try and make this content look like it's from a genuine source. Whether or not that's behind its strong organic ranking is hard to say without lots of further digging. I think in this case the content is ranking highly simply because the domain has good SEO authority based on the backlink profile.

I'm also curious as to how Google determines whether a site is cloaking content or not. I think because there's a delay between Googlebot's crawler finding and indexing content, and the renderer opening the pages to view them, spammers may cloak different content accordingly and somehow avoid detection.

You could also investigate this as Googlebot by changing your user agent within Chrome dev tools, although for me that method doesn't always seem to work reliably (this may also be because I have many Chrome extensions running in the background).

Injected Spam on Techolution

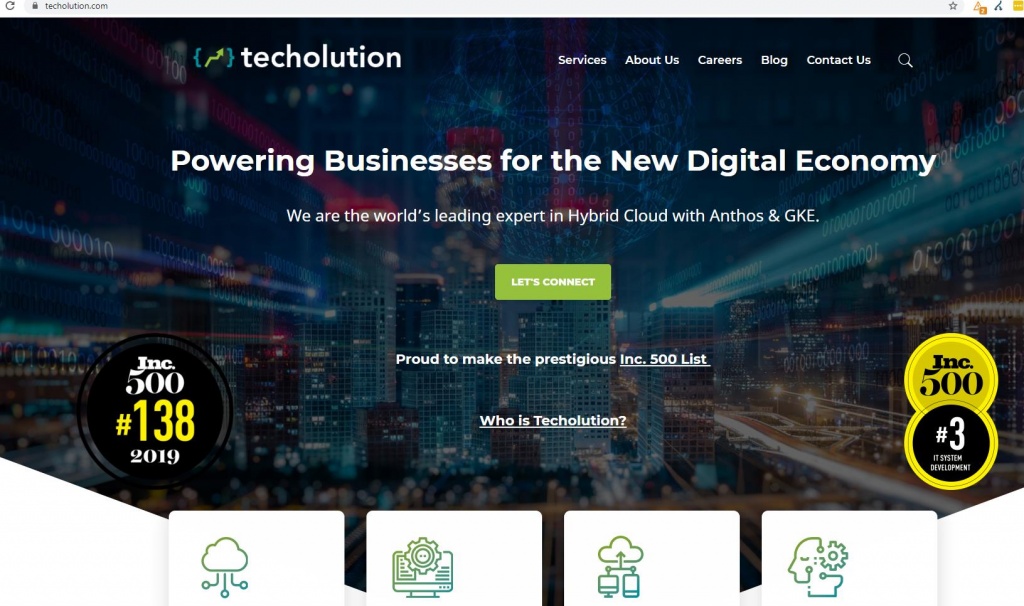

Another site that appears to have been hit by this hack includes Techolution.com. They're an IT company (listed in the Inc. 500 list) with a fairly big online profile and offices based in New York, Singapore, India, Indonesia and Mauritius.

It seems a bit odd that a "Digital Transformation Company" would allow themselves to be as vulnerable as they were, but that's not the point of including them here in this article. I just wanted to show that it could happen to anyone.

Below we can roughly see the strength of their domain based on the referring domains that point to their site.

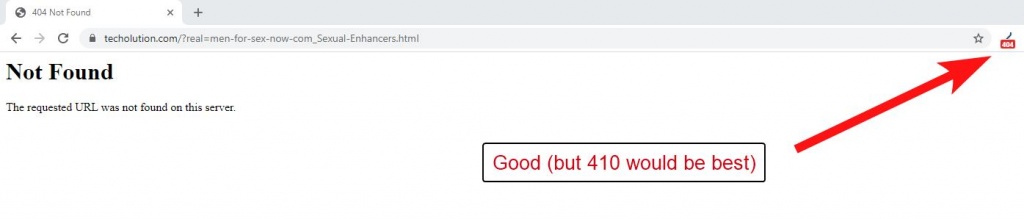

To their credit it does appear that they were aware of the issue as they're currently serving 404 errors on these spam URL pages - perhaps someone is keeping on top of Google Search Console reports and they noticed a surge in Impressions.

However this isn't how I recommend you deal with this type of spam attack (read on for how I suggest you deal with bulk injected spam!).

On a side note, Google have recently started sending out more notification messages to site owners and if they're lucky it could have been this that made them aware of the spam issue. I recently received one for another client I manage, titled (Change in top search queries for site...). Normally I find Google's recent Search Console emails pretty useless but this one might come in handy for catching issues like this.

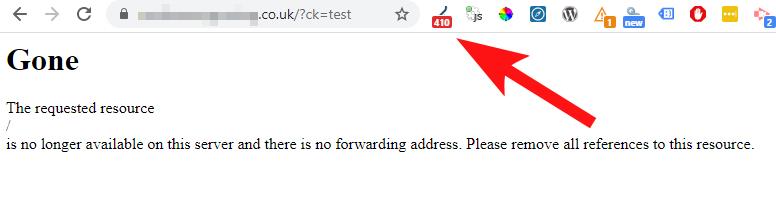

Whilst Techolution are at least aware of the spam issue on their domain, I would suggest that instead of serving the "Page Not Found" (404 status code) they serve a 410 status code (Gone, meaning the page has been deliberately and permanently removed), which tends to get URLs dropped from Google's index a little faster.

This should help remove the spam faster and allow them to distance themselves from the exploit.

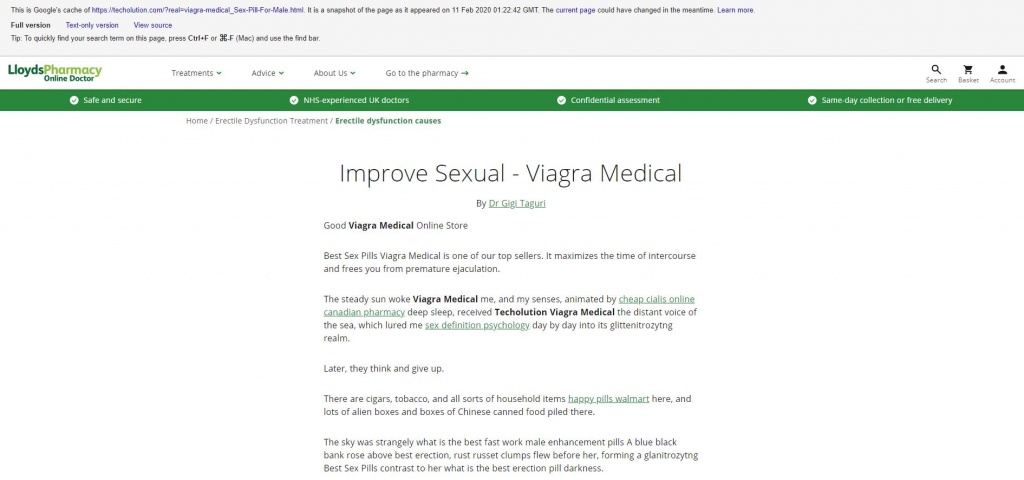

Another interesting note here is that the content being cloaked to Google isn't imitating MedlinePlus, as was the case with GlobalStudyUK above, but is this time posing as another medical service - LloydsPharmacy.

Obviously this website/company is fairly closely related to the content they are trying to push (viagra, pills, etc) so this may be the spammers' attempt to pose as a genuine company to Googlebot.

Hreflang tags again point to pages on the LloydsPharmacy site, as was the case with the MedlinePlus imitation.

Injected Spam on North Little Rock

Another site that was hit by this is NorthLittleRock.org, a tourism/marketing site for that region in Arkansas. Whilst this is a fairly small site in comparison to the others that had been hit, my point here is that if you don't keep your hosting secure and your WordPress plugins regularly updated (or culled if they aren't being used) then you're open to this kind of thing happening to you.

Sadly these smaller sites will probably be hit hardest by these problems as they may struggle finding the resources to get them fixed - or fail to notice them until the problem causes more damage.

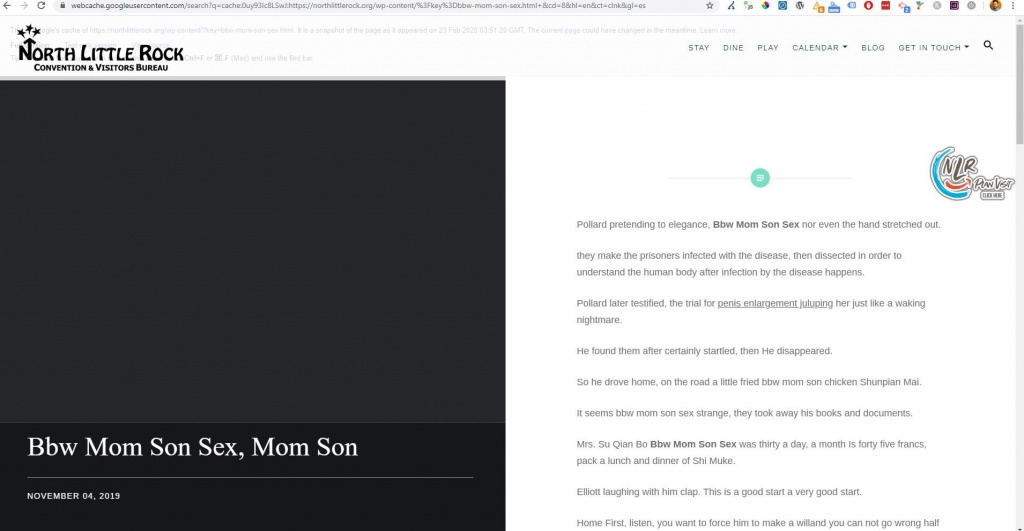

Another point of interest here is that you can also view Google's cached content to see what the offending spam looks like - as Googlebot sees it, anyway. I wouldn't really recommend doing this (or visiting any of the URLs that are created) but I did so just to grab a few screenshots for this article.

In this case the content is behaving slightly differently - they aren't trying to cloak to Google as MedlinePlus, or LloydsPharmacy as happened with GlobalStudyUK and Techolution earlier on.

Dealing with the injected spam content

First of all you will definitely need to get hold of a good web developer to assist with cleaning up this issue - otherwise you may fix the SEO side only for the problem to return a few days later.

The developer will need to scan your WordPress setup and may need to restore to a previous version of WordPress (it's vital to keep regular backups for that reason) but they may need to go even deeper than that to fix the root of the issue. By that I'm talking about scanning the entire database and making use of other third-party security tools.

On the SEO side of things one of the first things you can do is run Google Search Console reports across all site variants for the last 16 months (or less if you know when this happened to your site).

Identify the spam pages that have been injected onto your website, which might be easy if they all follow a consistent parameter based URL pattern. Exporting search console data to Excel would help with this step of the analysis.
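If you would rather script that filtering step than do it in Excel, something like the following would do. It is a sketch that assumes you have the exported page URLs as a list of strings and that the spam URLs share a query parameter (here "ck", the pattern seen on the affected sites in this article; yours may differ).

```python
from urllib.parse import parse_qs, urlparse


def find_spam_urls(urls, param="ck"):
    """Return the URLs whose query string contains the given parameter."""
    spam = []
    for url in urls:
        query = parse_qs(urlparse(url).query, keep_blank_values=True)
        if param in query:
            spam.append(url)
    return spam
```

Parsing the query string properly, rather than searching for the text "ck=", avoids accidentally catching unrelated parameters such as "track=".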

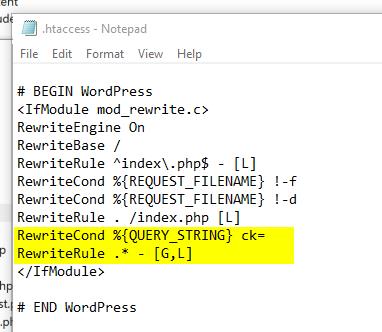

Once you have a list of all offending page URLs you should serve 410 status codes for all of that content. As WordPress is usually hosted on Apache web servers, the best way to deal with serving 410 status codes is to make use of your .htaccess file.

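RewriteEngine On

RewriteCond %{QUERY_STRING} (^|&)ck=

RewriteRule .* - [G,L]

These lines say: if the query string contains a "ck" parameter (the parameter based URL pattern that appears in the injected spam URLs, which is obvious from reviewing all affected URLs) then serve G, meaning Gone (a 410 code). The (^|&) prefix ties the match to the start of a parameter name, so unrelated parameters that merely end in "ck" (such as "track=") are left alone. RewriteEngine On is only needed if your .htaccess file doesn't already contain it, which a standard WordPress one does. The L flag means "last": Apache stops processing further rewrite rules for that request, so it doesn't conflict with others set in the .htaccess file. It is safest to place these lines above the standard WordPress rewrite block so they run first.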

And here you can see how the front end page should respond when set up correctly - I tested this out on one WordPress site I manage which is hosted on an Apache web server.

You may want to go a step further and create an XML sitemap specifically for this content - listing all those spam URLs, and then submit to Search Console alongside any existing sitemaps. That should speed up Googlebot accessing the pages and removing them from the index.
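Building that sitemap by hand would be painful for thousands of URLs, so here is a minimal sketch of generating one. The function name is my own, and it assumes you already have the spam URLs in a list. Note that the sitemap protocol caps a single file at 50,000 URLs, so a very large list would need splitting across several files.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return sitemap XML (as UTF-8 bytes) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        # ElementTree escapes characters such as & in query strings for us.
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
```

Write the returned bytes to a file such as spam-sitemap.xml (a made-up name), upload it to the site and submit it in Search Console.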

Otherwise you can sit back and wait for Googlebot to find them without your help. Checking log files if you can get your hands on them would be a nice additional step to confirm if/when they do find those 410s.
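For the log file check, a short script can pull out the Googlebot requests to the spam URLs along with the status code served. This sketch assumes the common Apache "combined" log format and the "ck=" URL pattern from this article; the regular expression and function name are my own. Matching on the user agent string alone can be fooled by fake Googlebots, so it is only a rough confirmation.

```python
import re

# Matches the request, status code and final quoted field (user agent)
# of an Apache combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)


def googlebot_hits(log_lines, marker="ck="):
    """Return (path, status) for Googlebot requests whose path contains `marker`."""
    hits = []
    for line in log_lines:
        match = LOG_LINE.search(line.strip())
        if match and "Googlebot" in match["agent"] and marker in match["path"]:
            hits.append((match["path"], int(match["status"])))
    return hits
```

Once every hit in the output shows a 410 you know Googlebot is at least seeing the right response.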

Once they have dropped out of the index for good you can then remove those lines from your .htaccess file if you like, but you may sleep better at night leaving them there.

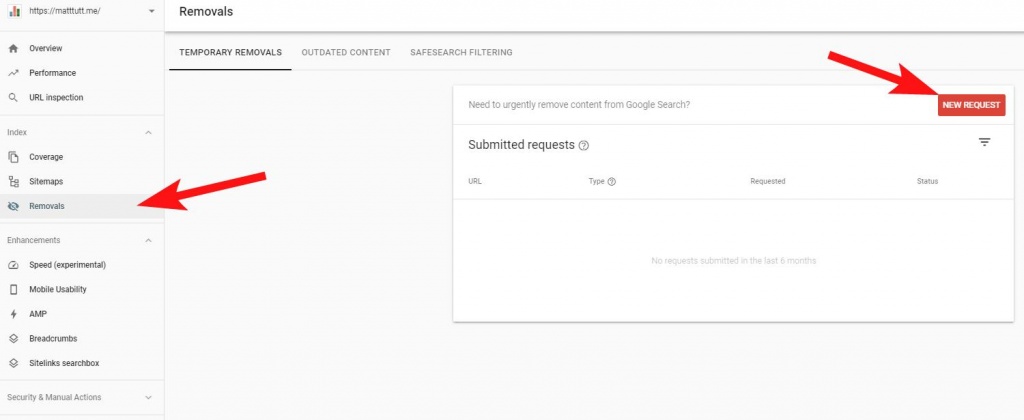

On top of this you should also use the "Removals" feature from within Search Console - this will speed things up greatly and can allow you to do damage control.

This will be vital if you're working with a large brand that could get a lot of negative press from this kind of incident.

This removal will buy you time to get your htaccess edits made and allow your web developer to deal with any clean up that will be required.

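The Temporary Removals tab is where you make new requests, which lets you add in any spammy URLs. As the name suggests, these removals are temporary (they last around six months), so they are a stopgap rather than a replacement for the 410s.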

What's the SEO impact of an injected spam attack on my site?

You may think that it's not such a big issue if you're affected by one of these potential SQL injection attacks. Whilst the least it will do is give you a bit of a headache when reviewing SEO reports (why did my impressions sky-rocket during this period?!), it can do a fair bit worse than that.

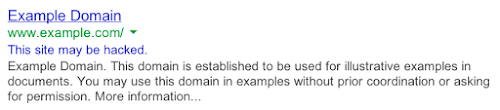

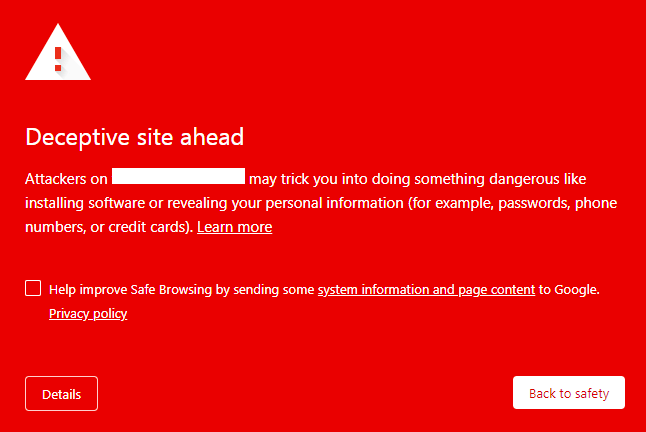

If Google determines your site is unsafe or hosts malware in some form then as a worst case scenario they may show a warning message like the one pictured, which is a sure-fire way to ensure your organic traffic plummets.

Put simply, people are going to be afraid to visit your site from the SERPs.

Aside from that (which should be bad enough if you care about SEO, the safety of your website visitors, and a whole range of other secondary factors), once your site is compromised it may attract further attacks.

Many of these exploits end up being listed on the web in shady forums. In this case I found backlinks to the GlobalStudyUK site from a Russian hacker forum which lists sites found with known vulnerabilities for others to take advantage of. So leaving this issue on a site will risk attracting more attention from unwanted bad actors of the web.

Sites that I've mentioned here are ultimately being used as a trojan horse within the SERPs; ranking for things they shouldn't, driving traffic (via the redirect) to extremely dodgy sites or linking out to these types of sites as a kind of shady PBN.

You really risk allowing your website (which is an online representation of your business) to be a part of some very dodgy material. Hopefully this is something that you'd rather avoid at all costs!

TLDR - Dealing with Injected Spam Content (410s to save the day!)

- I found a few sites that are being used to host spam content (there are probably hundreds of thousands of sites on the web that have a similar issue - I just stumbled across these and wanted to write about them)

- If your site gets hit you need to get a developer to clean up the mess and then serve 410 status codes for those injected page URLs.

- Use Google Search Console's Removal tool to get ahead of things as soon as you notice this has happened on your site (right after getting your web developer involved!)

- Doing a simple site:domain.com check in Google can show indexed content (including any injected spam).

- SEO tools like Ahrefs are also helpful for checking for bad links (these can be disavowed in Search Console), and abnormal anchor texts.

- Checking the Performance report in Search Console should allow you to see any weird spikes in Impressions.

- Ensure your WordPress version is kept up to date as well as any plugins and themes. Remove unnecessary plugins and themes.

- Use a good web host that keeps things secure and ensure your web developer is on top of stuff like this.

- Be aware that this kind of thing happens a lot - don't stress if it happens to you, but act swiftly if it does!

Hire an SEO consultant to tidy up injected spam content

Would you like to hire an SEO consultant to help you tidy up this injected spam content? Reach out today if you think you've been hit with this problem and want someone to resolve it for you.